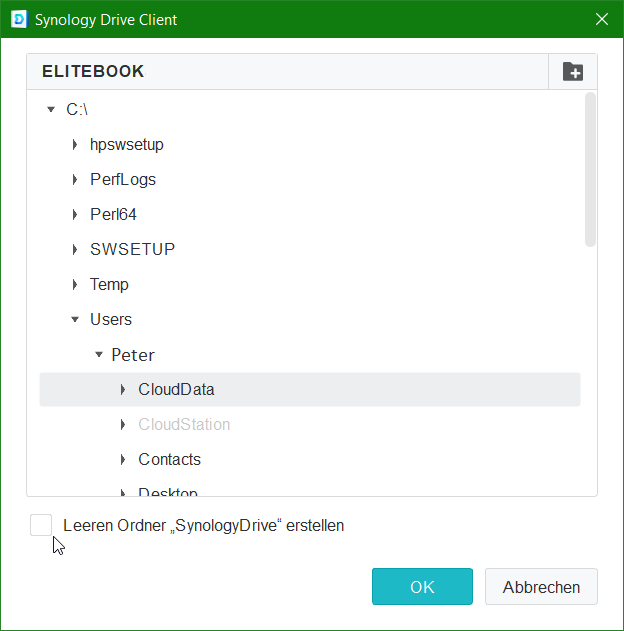

Synology drive client setup

5/1/2023

Main Storage: INTEL SSDPEK1A118GA 118GB L2ARC

Main Storage: INTEL SSDPEK1A058GA 58GB x2 SLOG

Main Storage: HGST HUH728080ALE604 8TB x2

Main Storage: HGST HUH728080ALN600 8TB x5

Jail Pool: INTEL SSDPEK1A118GA 118GB L2ARC (metadata only)

Memory: Kingston - ValueRAM 16GB (1 x 16GB) DDR4-2133 Memory KVR21E15D8/16I x3

Boot Pool: Intel - SSD DC S3500 Series 80GB 2.5" Solid State Drive x2

Memory: Kingston - ValueRAM 16GB (1 x 16GB) DDR4-2133 Memory KVR21E15D8/16

Motherboard: ASRock - E3C236D4U Micro ATX LGA1151 Motherboard

CPU: Intel - Core i3-6100 3.7GHz Dual-Core Processor

While my Synology units have served me well, my recent experience with a secondary BTRFS volume being lost to read-only status after a kernel panic (caused by the Synology Drive indexer working on the other volume, which remained intact) has left me thinking that ZFS is going to be more resilient than that. The BTRFS error was that, upon the fourth corrupt leaf, it turned the volume read-only. I have backups, so it isn't catastrophic, but it is cumbersome to have to remove and recreate the volume, then restore the data, as well as re-establish the NFS permissions, etc. Hopefully I'm not being naive in understanding that ZFS would have a better chance of recovering from such a situation, rather than forever banishing the volume to read-only until it is destroyed.

In any case, I'm continuing to do my homework with TrueNAS SCALE, with the hope that it will satisfy four application requirements beyond file sharing:

1) HyperBackup equivalent (seems to tick that box)
2) Active Backup for Business for backing up live VMware ESXi Hypervisor (i.e. freebie) VMs (I'm hoping the Nakivo application will satisfy that)
3) Calendar (as a central repo for my phone to sync/backup its calendar to)
4) Log Center (seems to tick the syslog server box)

As the Synology units are RS820+ and RS815+ (RAID6), I'm sticking to the 1RU form factor with Supermicro hardware and aiming for RAIDZ2. I'm guessing I should be installing the OS on the RAIDZ2 drives rather than a non-redundant boot SSD, but I guess further research will reveal the better way to go there. Hopefully, with all that sorted out, I'll build my two systems to replace both Synology units, but one step at a time, of course.

Time to work on my Google (well, DuckDuckGo)-fu and see if I'm headed in the right direction!! Looking forward to learning a thing or two from you lot!
